Question about the Local Model Inference lab using HuggingFace-VLLM

Hello.

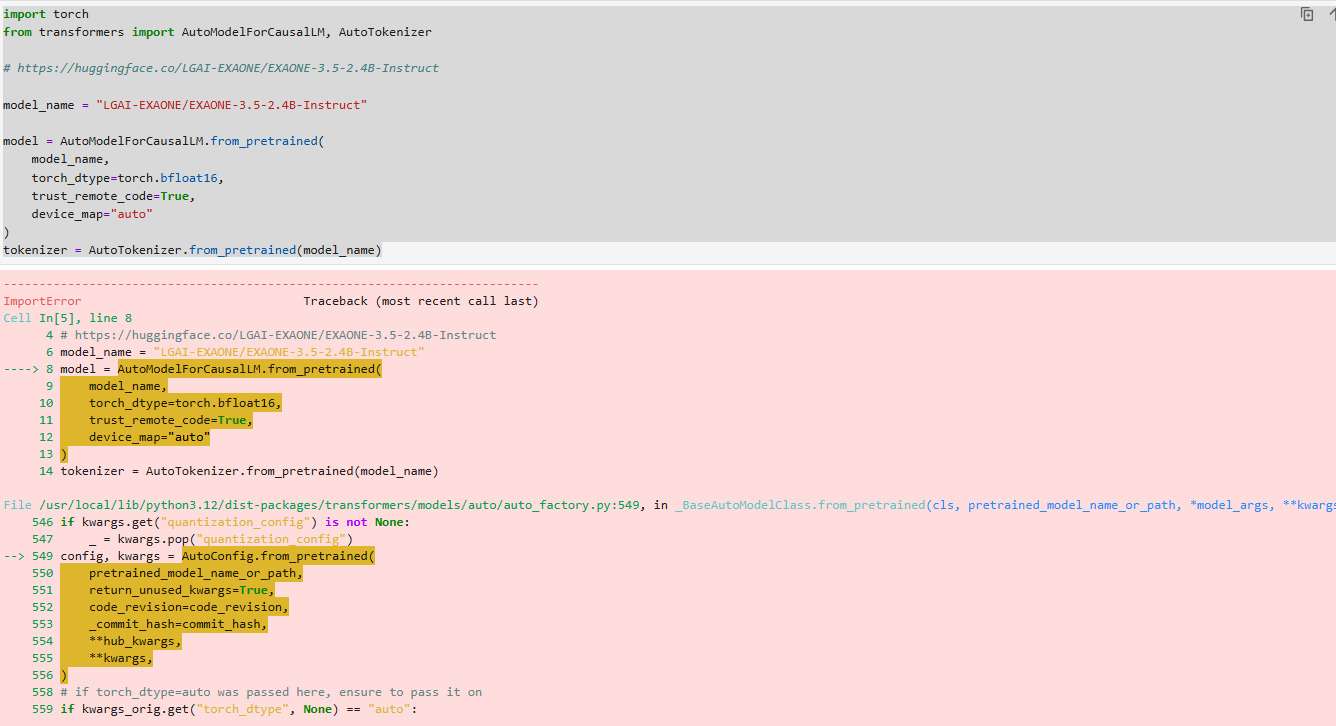

While working through the lab for this lecture, I get the error shown below. How should I resolve it?

The full error output is as follows.

---------------------------------------------------------------------------

ImportError Traceback (most recent call last)

Cell In[5], line 8

4 # https://huggingface.co/LGAI-EXAONE/EXAONE-3.5-2.4B-Instruct

6 model_name = "LGAI-EXAONE/EXAONE-3.5-2.4B-Instruct"

----> 8 model = AutoModelForCausalLM.from_pretrained(

9 model_name,

10 torch_dtype=torch.bfloat16,

11 trust_remote_code=True,

12 device_map="auto"

13 )

14 tokenizer = AutoTokenizer.from_pretrained(model_name)

File /usr/local/lib/python3.12/dist-packages/transformers/models/auto/auto_factory.py:549, in _BaseAutoModelClass.from_pretrained(cls, pretrained_model_name_or_path, *model_args, **kwargs)

546 if kwargs.get("quantization_config") is not None:

547 _ = kwargs.pop("quantization_config")

--> 549 config, kwargs = AutoConfig.from_pretrained(

550 pretrained_model_name_or_path,

551 return_unused_kwargs=True,

552 code_revision=code_revision,

553 _commit_hash=commit_hash,

554 **hub_kwargs,

555 **kwargs,

556 )

558 # if torch_dtype=auto was passed here, ensure to pass it on

559 if kwargs_orig.get("torch_dtype", None) == "auto":

File /usr/local/lib/python3.12/dist-packages/transformers/models/auto/configuration_auto.py:1346, in AutoConfig.from_pretrained(cls, pretrained_model_name_or_path, **kwargs)

1341 trust_remote_code = resolve_trust_remote_code(

1342 trust_remote_code, pretrained_model_name_or_path, has_local_code, has_remote_code, upstream_repo

1343 )

1345 if has_remote_code and trust_remote_code:

-> 1346 config_class = get_class_from_dynamic_module(

1347 class_ref, pretrained_model_name_or_path, code_revision=code_revision, **kwargs

1348 )

1349 config_class.register_for_auto_class()

1350 return config_class.from_pretrained(pretrained_model_name_or_path, **kwargs)

File /usr/local/lib/python3.12/dist-packages/transformers/dynamic_module_utils.py:616, in get_class_from_dynamic_module(class_reference, pretrained_model_name_or_path, cache_dir, force_download, resume_download, proxies, token, revision, local_files_only, repo_type, code_revision, **kwargs)

603 # And lastly we get the class inside our newly created module

604 final_module = get_cached_module_file(

605 repo_id,

606 module_file + ".py",

(...) 614 repo_type=repo_type,

615 )

--> 616 return get_class_in_module(class_name, final_module, force_reload=force_download)

File /usr/local/lib/python3.12/dist-packages/transformers/dynamic_module_utils.py:311, in get_class_in_module(class_name, module_path, force_reload)

309 # reload in both cases, unless the module is already imported and the hash hits

310 if getattr(module, "__transformers_module_hash__", "") != module_hash:

--> 311 module_spec.loader.exec_module(module)

312 module.__transformers_module_hash__ = module_hash

313 return getattr(module, class_name)

File <frozen importlib._bootstrap_external>:995, in exec_module(self, module)

File <frozen importlib._bootstrap>:488, in _call_with_frames_removed(f, *args, **kwds)

File /workspace/.cache/huggingface/modules/transformers_modules/LGAI_hyphen_EXAONE/EXAONE_hyphen_3_dot_5_hyphen_2_dot_4B_hyphen_Instruct/ccce25bd39c141fe053e0bc75818a8f5fe962802/configuration_exaone.py:24

21 """LG AI Research EXAONE Lab"""

23 from transformers.configuration_utils import PretrainedConfig

---> 24 from transformers.modeling_rope_utils import RopeParameters

27 class ExaoneConfig(PretrainedConfig):

28 r"""

29 This is the configuration class to store the configuration of a [`ExaoneModel`]. It is used to

30 instantiate a EXAONE model according to the specified arguments, defining the model architecture. Instantiating a

(...) 135 >>> configuration = model.config

136 ```"""

ImportError: cannot import name 'RopeParameters' from 'transformers.modeling_rope_utils' (/usr/local/lib/python3.12/dist-packages/transformers/modeling_rope_utils.py)
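In case it helps with diagnosis, here is a minimal sketch of a check that could be run in the same notebook. The traceback shows the model's remote `configuration_exaone.py` importing `RopeParameters` from `transformers.modeling_rope_utils`, so a mismatch between the installed transformers version and what the remote code expects is one possible cause (my assumption, not confirmed). The helper names `installed_version` and `has_name` are hypothetical and not part of transformers.

```python
import importlib
from importlib import metadata
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def has_name(module_name: str, attr: str) -> bool:
    """Return True if `module_name` imports cleanly and exposes `attr`."""
    try:
        module = importlib.import_module(module_name)
    except Exception:
        # Any failure to import is treated as "name not available".
        return False
    return hasattr(module, attr)


if __name__ == "__main__":
    # Report the installed transformers version and whether the name
    # from the ImportError above is present in this environment.
    print("transformers version:", installed_version("transformers"))
    print(
        "RopeParameters available:",
        has_name("transformers.modeling_rope_utils", "RopeParameters"),
    )
```

If the second line prints `False`, the installed transformers does not provide the name the downloaded model code asks for, which would match the error above.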

Thank you.